Biography

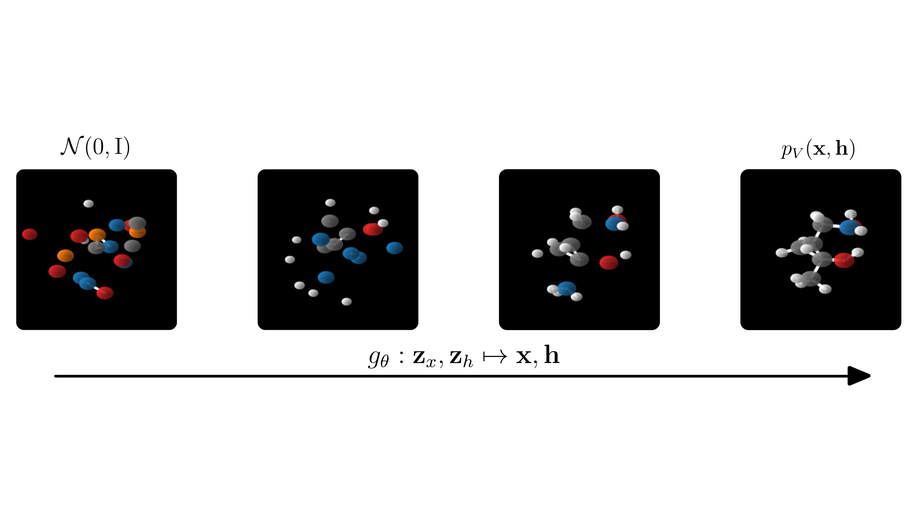

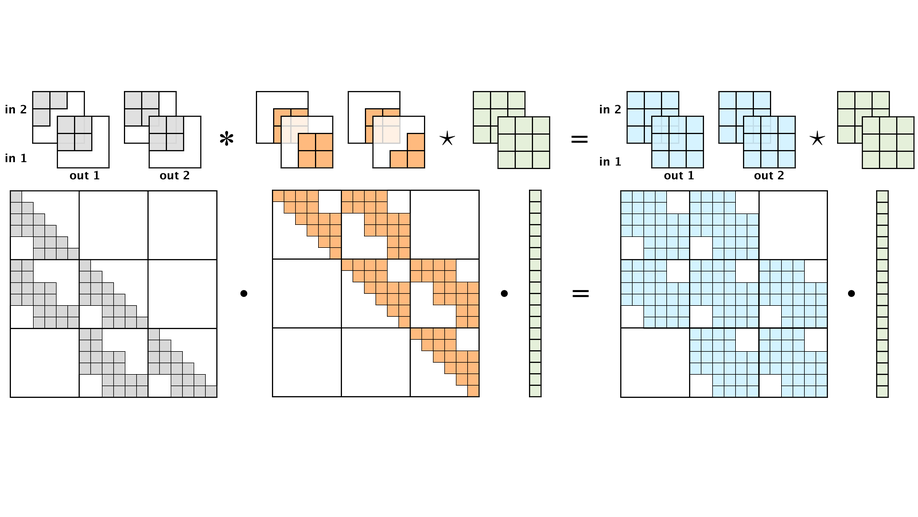

I am a researcher at Google DeepMind in Amsterdam, where I work on generative modelling. My recent research focuses on diffusion models, efficient sampling and distillation, and equivariant neural networks for structured data such as molecules and graphs.

Before joining Google in 2022, I completed my PhD at the University of Amsterdam with Max Welling in the UvA-Bosch Delta Lab. During my PhD I worked on normalizing flows, discrete generative models, and equivariant deep learning.

Interests

- Generative Modelling

- Diffusion Models

- Equivariant Deep Learning

- Artificial Intelligence

Education

PhD in Machine Learning, 2022

University of Amsterdam

MSc in Artificial Intelligence, 2017

University of Amsterdam

BSc in Aerospace Engineering, 2015

Delft University of Technology